Age Estimation by Multi-scale Convolutional Network

We conduct the experiments on the MORPH Album 2 database, which is the largest aging database we know of. For fair comparison and reproducible research, we supply the detailed evaluation protocols and 21 facial landmarks for all face images.

1. Database and Evaluation Protocols

MORPH Album 2 [1] contains about 55,000 face images of more than 13,000 subjects. The capture time spans from 2003 to 2007. Ages range from 16 to 77 years. Although it is a good and large database, the distributions of gender and ethnicity are uneven. The Male-Female ratio is about 5.5 : 1 and the Black-White ratio is about 4 : 1 (see the counts in Table 1). Apart from White and Black, the proportion of other ethnicities is very low (about 4%).

To use the database effectively, we follow the previous approach [2] to pre-process the database and randomly split it into three non-overlapping subsets S1, S2 and S3. First, all images in MORPH are processed by a face detector. Because MORPH contains some non-face images (e.g., tattoos), they are removed from the database after this step. The number of face images in the processed database is 55,244. Then the facial landmarks of the face images are localized by ASM, and local aligned patches are cropped based on the landmarks described in Section 3.1 of the paper. We construct the S1, S2 and S3 subsets by two rules: 1) making the Male-Female ratio equal to 3; 2) making the White-Black ratio equal to 1.

The information of the subsets is shown in Table 1. In all experiments, training and testing are repeated twice: 1) training on S1, testing on S2 + S3, and 2) training on S2, testing on S1 + S3. The performance of the two experiments and their average are reported. For age and gender estimation, all images in Table 1 are used. For ethnicity classification, the images in the “Other” column are excluded.
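The two-run protocol above can be sketched as a small driver. This is only an illustration under stated assumptions: `run_protocol` and the `train_and_score` callback are hypothetical names, each sample is represented by its file name, and the score can be whatever metric the task uses.

```python
from typing import Callable, Dict, List, Sequence

Sample = str  # assumption: a sample is identified by its file name


def run_protocol(
    subsets: Dict[str, List[Sample]],
    train_and_score: Callable[[Sequence[Sample], Sequence[Sample]], float],
) -> Dict[str, float]:
    """Run both experiments and return each score plus their average.

    `train_and_score(train, test)` is a hypothetical callback that trains a
    model on `train` and returns one performance number measured on `test`.
    """
    s1, s2, s3 = subsets["S1"], subsets["S2"], subsets["S3"]
    # Experiment 1: train on S1, test on S2 + S3.
    exp1 = train_and_score(s1, s2 + s3)
    # Experiment 2: train on S2, test on S1 + S3.
    exp2 = train_and_score(s2, s1 + s3)
    return {"exp1": exp1, "exp2": exp2, "average": (exp1 + exp2) / 2.0}
```

For ethnicity classification, the samples from the “Other” column would be dropped from `subsets` before calling the driver.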

Table 1. The information of the pre-processed MORPH Album 2 and the S1, S2, S3 subsets.

| | Black | White | Other |
|---|---|---|---|
| Male | S1: 4012, S2: 4012, S3: 28835 | S1: 4012, S2: 4012, S3: 0 | S3: 1845 |
| Female | S1: 1305, S2: 1305, S3: 3166 | S1: 1305, S2: 1305, S3: 0 | S3: 130 |
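As a quick consistency check, the counts in Table 1 can be summed to recover the database size and the two construction ratios. The dictionary layout and the helper name `count` are illustrative choices; the numbers are copied from the table.

```python
# Counts copied from Table 1, indexed as COUNTS[gender][ethnicity][subset].
COUNTS = {
    "Male": {
        "Black": {"S1": 4012, "S2": 4012, "S3": 28835},
        "White": {"S1": 4012, "S2": 4012, "S3": 0},
        "Other": {"S3": 1845},
    },
    "Female": {
        "Black": {"S1": 1305, "S2": 1305, "S3": 3166},
        "White": {"S1": 1305, "S2": 1305, "S3": 0},
        "Other": {"S3": 130},
    },
}


def count(subset=None, gender=None, ethnicity=None):
    """Sum the table cells matching the given filters (None matches all)."""
    total = 0
    for g, by_ethnicity in COUNTS.items():
        if gender is not None and g != gender:
            continue
        for e, by_subset in by_ethnicity.items():
            if ethnicity is not None and e != ethnicity:
                continue
            for s, n in by_subset.items():
                if subset is None or s == subset:
                    total += n
    return total


total_images = count()                    # all cells of the table
s1_size = count(subset="S1")              # size of the training subset S1
s1_male_female = count("S1", "Male") / count("S1", "Female")
s1_white_black = count("S1", ethnicity="White") / count("S1", ethnicity="Black")
```

The total comes to 55,244 images, matching the size of the processed database. S1 holds 10,634 images with a Male-Female ratio of about 3.07 and a White-Black ratio of exactly 1; S2 has the same composition.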

The file lists of Exp1: S1, S1 Test (S2 + S3) and Exp2: S2, S2 Test (S1 + S3) can be downloaded here.

The format of the list file is:

[filename] [age] [ethnicity] [gender],

where

[age] is between 16 and 77,

[ethnicity] is 0 for Black, 1 for White, -1 for others,

[gender] is 0 for Male, 1 for Female.

Some sample lines are shown below:

Album2/009055_0M54.jpg 54 1 0

Album2/019066_08M70.jpg 70 0 0

Album2/262418_01F20.jpg 20 0 1

Album2/261887_00M26.jpg 26 -1 0

Album2/268066_00F36.jpg 36 -1 1

…
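A minimal sketch of a reader for this list format follows. The names `Record`, `parse_list_line`, `parse_list` and `ethnicity_subset` are hypothetical and not part of the released files; the sketch assumes the four fields are separated by whitespace, as in the sample lines.

```python
from typing import Iterable, List, NamedTuple


class Record(NamedTuple):
    filename: str
    age: int        # between 16 and 77
    ethnicity: int  # 0 = Black, 1 = White, -1 = others
    gender: int     # 0 = Male, 1 = Female


def parse_list_line(line: str) -> Record:
    """Parse one '[filename] [age] [ethnicity] [gender]' line."""
    # Split from the right so the file name stays intact even if it
    # happens to contain whitespace.
    filename, age, ethnicity, gender = line.strip().rsplit(None, 3)
    return Record(filename, int(age), int(ethnicity), int(gender))


def parse_list(lines: Iterable[str]) -> List[Record]:
    """Parse all non-empty lines of a list file."""
    return [parse_list_line(line) for line in lines if line.strip()]


def ethnicity_subset(records: Iterable[Record]) -> List[Record]:
    """Keep only Black/White records, as used for ethnicity classification."""
    return [r for r in records if r.ethnicity in (0, 1)]
```

A list file could then be loaded with `parse_list(open(path, encoding="utf-8"))`, where `path` points to one of the downloaded lists.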

Note (2015-8-28): After the publication of this work, we found that some face images in S2 and S2_Test overlap, so we re-generated a test set, "S2_Test_Fixed", to fix this problem. If you want to compare with our paper, you should use S2_Test. If you want to evaluate performance more objectively, you should use S2_Test_Fixed. We are very sorry for our mistake. Many thanks to Alberto Escalante (alberto.escalante@ini.rub.de) for pointing out this problem.

2. Facial Landmarks

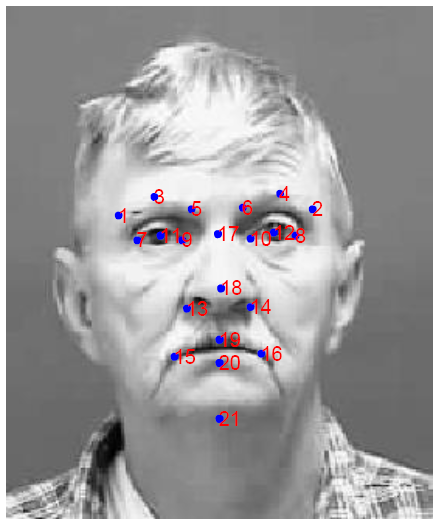

In our paper, we use 21 facial landmarks to crop aligned patches from face images. The positions of the landmarks are shown in Fig. 1.

Figure 1. The 21 facial landmarks used in the experiments.

The facial landmarks can be downloaded here. The landmarks are detected automatically by a multi-view ASM. There may be some errors in the coordinates, but we leave them uncorrected to test the robustness of our convolutional network.

The landmarks for each face image are saved to a separate text file, e.g., the landmarks of “009055_0M54.jpg” are in “009055_0M54.jpg.shape21”. The format of a “shape21” file is:

x1, y1

x2, y2

x3, y3

…

x21, y21
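A “shape21” file in this layout could be read with a short parser such as the one below. This is a sketch under assumptions: the function name `parse_shape21` is hypothetical, each line is taken to hold one comma-separated `x, y` pair, and coordinates are converted with `float()` because the page does not say whether they are stored as integers or decimals.

```python
from typing import List, Tuple


def parse_shape21(text: str) -> List[Tuple[float, float]]:
    """Parse the contents of a '.shape21' file into 21 (x, y) pairs."""
    points: List[Tuple[float, float]] = []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue  # tolerate blank lines
        x, y = line.split(",")
        points.append((float(x), float(y)))
    if len(points) != 21:
        raise ValueError(f"expected 21 landmarks, found {len(points)}")
    return points
```

For the example above, the input would be the text of “009055_0M54.jpg.shape21”, e.g. `parse_shape21(open(path, encoding="utf-8").read())`.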

3. Publication

The article based on these evaluation protocols has been published in ACCV 2014. Please cite that paper in any published work if you use the protocols and data.

4. References

[1] Rawls, A., Ricanek, K.: Morph: Development and optimization of a longitudinal age progression database. In: COST 2101/2102 Conference. (2009) 17-24.

[2] Guo, G., Mu, G.: Human age estimation: What is the influence across race and gender? In: Computer Vision and Pattern Recognition Workshops (CVPRW), 2010 IEEE Computer Society Conference on. (2010) 71-78.