|

Many efforts have been made in recent years to tackle the challenge of unconstrained face recognition. The Labeled Faces in the Wild (LFW) [2] database has been widely used as the benchmark for this challenge. However, the standard LFW protocol is very limited:

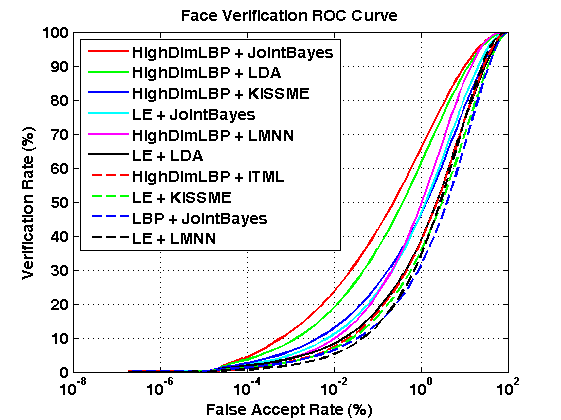

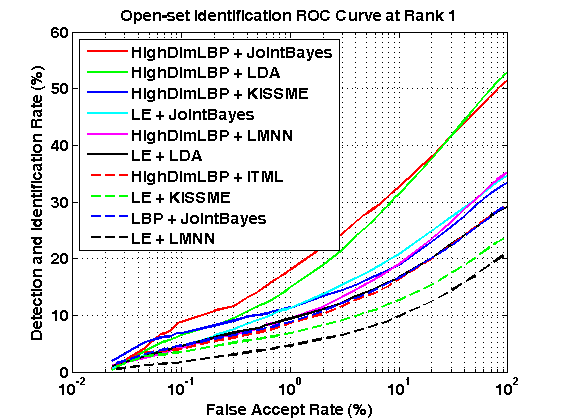

We therefore developed a new benchmark protocol that fully exploits all 13,233 LFW face images for large-scale unconstrained face recognition evaluation. The new protocol, called BLUFR, covers both verification and open-set identification scenarios, with a focus on low false accept rates (FARs). It consists of 10 trials of experiments, with each trial containing on average about 156,915 genuine matching scores and 46,960,863 impostor matching scores for performance evaluation. We provide a benchmark tool here to further advance research in this field. For more information, please read our IJCB paper [1] and the README files in the benchmark toolkit. |
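
To make the verification measure concrete, the following is a minimal sketch of how a verification rate (VR) at a fixed FAR can be computed from one trial's genuine and impostor scores. It is not the code of the BLUFR toolkit. The function name `verification_rate_at_far`, the assumption that higher scores mean greater similarity, and the strict `>` acceptance rule are all illustrative choices.

```python
def verification_rate_at_far(genuine, impostor, far):
    """Return (VR, threshold) for a target false accept rate.

    Assumptions (not taken from the BLUFR toolkit): higher scores mean
    "more similar", and a pair is accepted when score > threshold.
    """
    if not genuine or not impostor:
        raise ValueError("both score lists must be non-empty")
    if not 0.0 <= far <= 1.0:
        raise ValueError("far must lie in [0, 1]")

    # Sort impostor scores from highest to lowest.
    ranked = sorted(impostor, reverse=True)

    # Number of impostor pairs that may be falsely accepted.
    allowed = int(far * len(ranked))

    # Use the (allowed + 1)-th highest impostor score as the threshold, so
    # at most `allowed` impostor scores lie strictly above it.
    threshold = ranked[allowed] if allowed < len(ranked) else float("-inf")

    # VR is the fraction of genuine pairs accepted at that threshold.
    accepted = sum(1 for score in genuine if score > threshold)
    return accepted / len(genuine), threshold


if __name__ == "__main__":
    genuine_scores = [0.9, 0.8, 0.7, 0.4]
    impostor_scores = [0.1, 0.2, 0.3, 0.5, 0.6, 0.15, 0.25, 0.35, 0.05, 0.45]
    vr, thr = verification_rate_at_far(genuine_scores, impostor_scores, far=0.1)
    print(f"VR = {vr:.2f} at threshold {thr:.2f}")
```

At the scale of this benchmark, a FAR of 0.1% over about 46,960,863 impostor scores allows roughly 46,960 false accepts per trial, which is why a large impostor set is needed to estimate performance reliably at low FARs.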


|

Shengcai Liao, scliao@nlpr.ia.ac.cn, National Laboratory of Pattern Recognition, Institute of Automation, Chinese Academy of Sciences. |


Notes: (1) Algorithms are ranked by verification rate (VR) at FAR = 0.1%. (2) Performance is reported as (μ - σ), the mean minus one standard deviation, over the 10 trials. (3) The citations indicate the source of each result.

Download the result files and demo code for plotting performance: Results.zip

Please contribute your algorithm's performance so that we can keep track of the state of the art for large-scale unconstrained face recognition. |
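
As an illustration of note (2), the sketch below computes a (μ - σ) summary from per-trial results. The helper name `mean_minus_std` is hypothetical, and the use of the sample standard deviation (n - 1 denominator) is an assumption; the benchmark toolkit may normalize differently.

```python
import statistics


def mean_minus_std(per_trial_rates):
    """Summarize per-trial rates as mean minus one standard deviation.

    Assumption: sigma is the sample standard deviation (n - 1 denominator).
    """
    if len(per_trial_rates) < 2:
        raise ValueError("at least two trials are needed to estimate sigma")
    mu = statistics.mean(per_trial_rates)
    sigma = statistics.stdev(per_trial_rates)
    return mu - sigma


if __name__ == "__main__":
    # Made-up placeholder rates for 10 trials, not real benchmark results.
    example_rates = [0.41, 0.43, 0.40, 0.42, 0.44, 0.39, 0.42, 0.41, 0.43, 0.40]
    print(f"mu - sigma = {mean_minus_std(example_rates):.4f}")
```

Reporting μ - σ instead of μ alone penalizes algorithms whose performance varies widely across trials.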


|

[1] Shengcai Liao, Zhen Lei, Dong Yi, and Stan Z. Li. "A Benchmark Study of Large-scale Unconstrained Face Recognition." In IAPR/IEEE International Joint Conference on Biometrics, Clearwater, Florida, USA, Sep. 29 - Oct. 2, 2014. [pdf] [slides]

[2] G. B. Huang, M. Ramesh, T. Berg, and E. Learned-Miller. "Labeled Faces in the Wild: A Database for Studying Face Recognition in Unconstrained Environments." Technical Report 07-49, University of Massachusetts, Amherst, October 2007. |


| Last updated: Jul. 31, 2014 |